How Marketers Misuse Data

This summer, I ran the Peachtree Road Race 10k in 1:03:55.

Some of you may think I am an occasional runner. Others, that I was just doing the race for fun without concern for time. But what conclusions can you actually draw from this?

None — because you didn’t know about external factors. You didn’t know that I started in Wave U and had to weave through crowds of walkers. You didn’t know that it was 95 degrees already when the race started at 9 a.m., and that the humidity warning was high. You didn’t know I had taken an energy gel right before the race, skipped the beer at the Mellow Mushroom tent, and seriously needed new running shoes. You didn’t know I ran a marathon a year ago in 3:55:46.

You didn’t know any of this information. So with only one piece of data — my race time — you actually don’t get a full picture of my race or my capabilities.

This all may seem obvious, but for some reason, when you place this concept in a business setting, it’s immediately forgotten. Marketers LOVE data. We crave it. Go to any marketing conference and you’ll hear at least one talk about the need to track and report.

And it makes sense, right? Our clients want to see numbers and results. How many people visited our website this month? How many conversions did we have? Is that number higher than last month?

We set up dashboards and reports just so clients can see these metrics daily. We obsess over analytics and what the numbers mean.

But here’s the thing: on their own, those numbers don’t mean much. Looking at data in isolation just doesn’t work.

Data Bias in Marketing

Marketers and clients are under constant pressure to perform well. We want to show our marketing efforts are paying off, and our clients want to show their bosses they chose the right agency partner. This means a lot is on the line for everyone involved.

Unfortunately, this leads to data bias. We get so caught up in trying to prove positive results that we subconsciously frame the data the way we want it to look. Perhaps we’re looking at too small a timeframe or data sample. Or maybe we’re looking at one campaign or digital channel in isolation. Either way, we’re not getting the big picture. We get caught up in a vicious cycle that can include any of the following forms of bias:

- Confirmation Bias — a tendency to search for or interpret information in a way that confirms an existing hypothesis, assumption, or opinion, leading to statistical errors. For example, say you’re running a CRO test between landing page Version A (the original design) and Version B (the new design). If Version B (the newly designed, way-better-than-the-original landing page) is winning, it’s tempting to call the test done at only an 85% confidence level, when in fact Version A might have won had the test run to a higher confidence level.

- Selection Bias — when data is selected subjectively rather than objectively, or when non-random data has been selected. For example, only looking at a week’s worth of data rather than a month’s worth because the results look better. Or unknowingly only including a certain type of runner in your UX test of a finish line photo website.

- Yule-Simpson Effect — a paradox (also known as Simpson’s paradox) in which a trend appears in different groups of data but disappears or reverses when these groups are combined. For example, City A’s paid search campaign appears to be driving more conversions than City B’s. But once you break the conversions down by type, you discover City B is driving more of the important primary conversions while City A is mostly driving less important ones.
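
To make two of these biases concrete, here is a minimal Python sketch. Every number in it is made up for illustration, and the function names (`ab_confidence`, `conversion_rate`, `overall_rate`) are hypothetical helpers, not part of any analytics tool. The first half estimates a confidence level for an A/B test using a pooled two-proportion z-test, which is one common approach (your testing tool may calculate it differently). The second half shows a Yule-Simpson reversal, using a device split as the assumed grouping.

```python
import math


def ab_confidence(conv_a, visits_a, conv_b, visits_b):
    """Two-sided confidence (1 - p-value) that A and B convert at
    different rates, from a pooled two-proportion z-test."""
    rate_a = conv_a / visits_a
    rate_b = conv_b / visits_b
    pooled = (conv_a + conv_b) / (visits_a + visits_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return 1 - p_value


# Hypothetical test: Version B looks ahead (5.4% vs. 4.0% conversion rate).
early_confidence = ab_confidence(conv_a=40, visits_a=1000,
                                 conv_b=54, visits_b=1000)
print(f"Confidence that A and B really differ: {early_confidence:.0%}")


def conversion_rate(conversions, clicks):
    """Conversions per click for a single segment."""
    return conversions / clicks


def overall_rate(segments):
    """Conversions per click with all segments combined."""
    total_conversions = sum(conv for conv, _ in segments.values())
    total_clicks = sum(clicks for _, clicks in segments.values())
    return total_conversions / total_clicks


# Hypothetical (conversions, clicks) per device for two city campaigns.
city_a = {"desktop": (20, 200), "mobile": (24, 800)}
city_b = {"desktop": (72, 800), "mobile": (4, 200)}

for device in city_a:
    rate_a = conversion_rate(*city_a[device])
    rate_b = conversion_rate(*city_b[device])
    print(f"{device}: City A {rate_a:.1%} vs. City B {rate_b:.1%}")

print(f"combined: City A {overall_rate(city_a):.1%} "
      f"vs. City B {overall_rate(city_b):.1%}")
```

With these made-up numbers, the A/B test sits in the mid-80s percent range. That means there is still roughly a one-in-seven chance of seeing a gap this large even if the two pages performed identically, which is why calling the test early is risky. In the second half, City A has the better conversion rate on both desktop and mobile, yet City B has the better rate once the devices are combined, because most of City A’s clicks come from the lower-converting mobile segment.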

To be good strategists, or even just good marketers in general, we have to consider how outside factors are affecting the results we see for clients. We need to consider the “why” behind the numbers… the people behind the numbers.

It’s time to stop asking for numbers, and start asking for stories.

A Call for Human-Centered Data Analysis

A consumer isn’t a number or piece of data. They’re a human being who made a choice to visit a website or buy a product due to a specific set of circumstances in their life. While our marketing efforts may have contributed, it’s likely they had a whole host of influences and interests and motivations that drove them to that moment in time.

To provide our clients with the best information, we need perspective around data. We can’t continue to pick and choose data to justify the future actions and campaigns we want to run, or to point to success. Not only is that unfair to consumers, it can be disastrous for clients who make major decisions based on narrow, possibly inaccurate data analysis. Here are three simple things you can do to change the way you analyze your data:

- Don’t get hung up on one metric — While it’s important to identify KPIs and overall goals of a project in advance, getting hung up on the performance of one metric over another may lead to an imbalance that affects all areas of a campaign. For example, if you only focus on the number of “Book Now” button clicks and don’t take into consideration the number of “Save For Later” clicks or map address clicks, you’re not getting the full picture of campaign success.

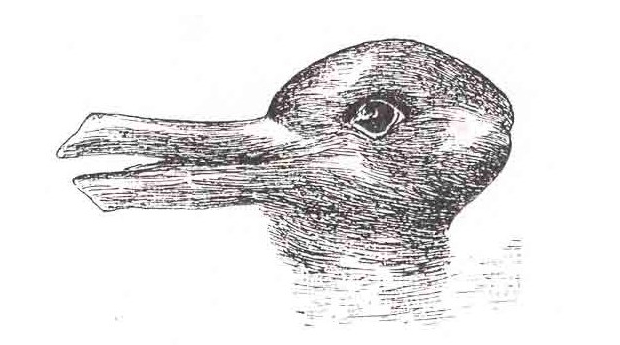

- Ask a neighbor — In a fast-paced environment, this may seem like a waste of time or a doubling of efforts. But by talking to someone outside of your project or department bubble and having them give their own analysis of the situation, you can become aware of unconscious biases you may have. It’s not your fault: once you see a problem in a certain way, it’s hard to see it in any other way. Sometimes it just takes a second opinion to push you further and get to the true diagnosis of the problem.

- Check the outside world — It’s always important to consider the larger context of the world. By seeing what others in the industry are saying, or what trends are happening outside your own campaigns, you can gain a better understanding of what’s driving results. For example, if your paid ad performance seems down month over month (MoM) and year over year (YoY) out of nowhere, instead of pulling your hair out wondering what changed, consider that it may be because Google suddenly got rid of its right-hand-side SERP ads.

It’s easy to draw false conclusions when looking at data in a vacuum. But the fact of the matter is, nothing exists on its own. By following these easy steps, you can begin to avoid jumping to the wrong conclusion and providing inaccurate data analysis, and instead focus on providing a human-centered view of all of your client’s business efforts.
